Scam Alert Texas: Why “It Looked Real” Is No Longer Enough

- Adriana Perez

- 2 days ago

Scams used to have obvious warning signs. The email was full of typos. The offer looked too good to be true. The caller’s story didn’t make sense. Most of us were taught to spot those red flags and move on.

That world is changing.

Today a scammer can sound like your child. They can send a cloned video of a loved one. They can mimic your boss’s email. They can post a rental listing for a house that isn’t theirs. They can time a fake invoice to hit when you’re under deadline. They know what neighborhood you live in, who your friends are, and what you’re worried about. They use artificial intelligence to make it all look and sound right.

That is what makes the new wave of scams so dangerous. They are no longer just technical problems; they are personal.

For Texas families, homeowners, renters, buyers, sellers and small business owners, that means updating the way we think about safety. The goal is not to live in fear. The goal is to learn how to verify, to take a breath, and to protect each other.

Because in today’s world, “it looked real” is no longer enough.

Why This Matters Now

Cybercrime is not some distant issue that only affects large companies. It hits regular people trying to pay bills, buy homes, apply for rentals, support their kids, and take care of aging parents. The FBI’s 2025 Internet Crime Report shows that Americans submitted more than 1 million complaints and reported losses approaching $21 billion, an increase from 2024. For the first time, the report includes a category for artificial-intelligence-related fraud, with more than 22,000 complaints and $893 million in losses. Older adults were hit hard: people aged 60 and above reported $7.7 billion in losses.

Social media is a particularly common starting point for scams. According to new FTC data released in April 2026, nearly 30% of people who reported losing money to a scam in 2025 said it started on social media, with reported losses reaching $2.1 billion. Losses from social-media scams have increased eightfold since 2020; investment scams account for the biggest share, and Facebook is the top platform where people reported losing money.

Those numbers are not just statistics. Each complaint is a retiree, widow, parent, homeowner, renter or business owner who thought they were doing the right thing and got taken. Our community cannot afford to ignore them.

The Big Shift: Scams Are Becoming More Human

Artificial intelligence didn’t invent scams. People have been deceiving people for as long as money and trust have existed. What AI has changed is the quality and speed of the deception.

A scammer no longer has to be a good writer; AI can compose the message. They don’t need to be a designer; AI can create a polished image or fake advertisement. They don’t need to speak perfect English; AI can translate and rewrite. They don’t need to spend hours researching you; social media, public records and data breaches give them personal information, and AI tools can sort and personalize it.

CISA notes that poor grammar and spelling used to be a giveaway, but AI can make scam messages look professional. So instead of only asking, “Does this look fake?”, we have to start asking, “Can I verify this is real?” That mental shift is our best defense.

Key terms

| Term | Meaning |
| --- | --- |
| Deepfake | Fake or altered media created with AI, including photos, videos or voices. Scammers can use voice cloning with a short audio clip to sound like a real loved one. |
| Synthetic content | Information produced or heavily altered by AI. It includes AI-generated articles, images, videos, reviews, profiles and ads. NIST warns that synthetic content can harm information integrity and trust and highlights the need for provenance tracking, watermarking and detection to reduce risks. |
| Provenance | The origin or history of a piece of content. Knowing who created it, where it came from and whether AI was involved helps determine whether it can be trusted. |

Human Verification Is Becoming the New Normal

One of the biggest changes on the horizon is the need to prove that a person is who they claim to be.

In past years, people were told to look for obvious glitches in AI‑generated videos—blurry hands, awkward blinking, robotic voices. Those clues still help, but the technology is improving. Some deepfake voices and images are now realistic enough to fool people, especially when combined with emotional pressure.

That’s why we need human verification habits. A few examples:

Family verification phrase: Choose a private word or phrase that your family knows. If someone calls claiming to be a loved one in an emergency, ask for the phrase. Keep it off social media.

Callback rule: If a call or message asks for money, personal information, account access or urgent action, hang up and call back using a number you already trust. This is especially important with vendors, contractors, lenders and title companies.

Wire‑verification rule: Never wire money based solely on email instructions. Confirm the wiring instructions by calling the title company or escrow officer directly. In Texas real estate, wire fraud is one of the fastest‑growing crimes, and verifying instructions via a trusted number has saved many buyers from losing their down payments.

Liveness checks: Some video‑chat tools and identity‑verification systems now ask you to blink, turn your head or speak a random phrase to prove you are not a static image. Use them when available.

These habits may feel awkward at first, but they are small actions that stop scams in their tracks.
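
For readers who like to see rules written down precisely, the habits above can be sketched as a small decision function. This is an illustration only, written in Python: the `Request` fields and the `required_checks` function are hypothetical names made up for this example, not part of any real tool or agency guidance.

```python
from dataclasses import dataclass


@dataclass
class Request:
    """A hypothetical description of an incoming call, text, or email."""
    claims_to_be_family: bool = False
    asks_for_money: bool = False
    asks_for_personal_info: bool = False
    asks_for_account_access: bool = False
    is_urgent: bool = False
    has_wire_instructions: bool = False


def required_checks(req: Request) -> list[str]:
    """Return the verification habits that apply before acting on a request."""
    checks = []
    if req.claims_to_be_family:
        checks.append("ask for the family verification phrase")
    if (req.asks_for_money or req.asks_for_personal_info
            or req.asks_for_account_access or req.is_urgent):
        checks.append("hang up and call back on a number you already trust")
    if req.has_wire_instructions:
        checks.append("confirm wiring instructions by phone with the title company")
    return checks


# Example: an "emergency" call from someone who sounds like a relative.
call = Request(claims_to_be_family=True, asks_for_money=True, is_urgent=True)
for step in required_checks(call):
    print("-", step)
```

The point of the sketch is that the checks depend on what is being asked, not on how convincing the caller sounds. A request that triggers no checks returns an empty list.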

The Internet Is Filling Up With AI‑Generated Content

Another trend that affects all of us is the AI feedback loop. AI systems generate content. That content gets posted online. Other AI systems scrape and reuse it. Over time, the internet can fill with polished text, images and videos that sound confident but aren’t based on firsthand knowledge or credible reporting.

NIST’s report on synthetic content stresses the need for transparency tools—provenance tracking, watermarking, labeling, detection and audit mechanisms—to reduce risks. But those tools are still evolving. Meanwhile, it’s easy for fake reviews, fake rental listings, fake investment advice and fake business pages to look real.

For our community, this matters because many Texans rely on online research for home repairs, property listings, job opportunities, loans and social services. We have to check the source, look for multiple confirmations and avoid trusting a single polished piece of content. If something seems too tailored or too perfect, investigate further.

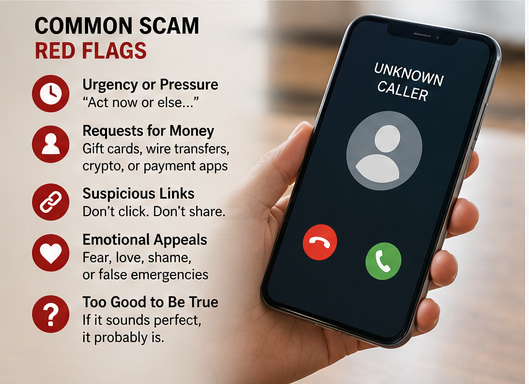

Scams Are Becoming Emotionally Engineered

A scam does not always feel suspicious. Sometimes it feels like you’re rushing to help someone. Sometimes it feels like an urgent deadline. Sometimes it feels like you’ve found the perfect home. Scammers exploit emotions—fear, love, hope, shame, loneliness and excitement—to make us act quickly.

Social engineering is the practice of tricking people into doing something unsafe, such as clicking a link, downloading a file or sharing a code. Emotional engineering takes that further. It adds urgency and secrecy.

Common emotional scam messages include:

“Your child has been arrested.”

“Your account will close today.”

“I’m in the hospital; I need help.”

“You’ve won, but you must act now.”

“I love you, but I need money.”

“Your package is stuck.”

“You owe taxes and will be arrested.”

“Your bank account is compromised; transfer money to protect it.”

The pressure is intentional. The FTC explains that fake emergency scams often rely on urgency, secrecy and emotional manipulation. When a message makes your heart race, pause. A real emergency or legitimate transaction can survive a callback, a second opinion or a short delay.

Browsers Have Become the New Front Door for Deception

We use our web browsers for everything: searching, shopping, banking, email, research, real‑estate listings, bill payments and business. That makes them a major target. Scammers are not limited to email anymore. They build fake websites, fake ads, fake login pages, fake pop‑ups and malicious browser extensions.

A browser extension is a small add‑on that gives extra features, like coupon finders or screenshot tools. Some are useful, but malicious extensions can steal data, track browsing or redirect searches. Researchers have found that cybercriminals are exploiting the popularity of generative AI tools by creating fake AI‑themed browser extensions that steal data or redirect users. Keeping your browser and its extensions up to date, deleting extensions you don’t use and only installing trusted tools are simple ways to lower risk.

CISA recommends using strong passwords, turning on multifactor authentication, updating software and learning to recognize phishing attempts. These steps might sound boring, but they are the digital equivalent of locking your doors and windows. They won’t stop every threat, but they make you a harder target.
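
One reason fake login pages work is that a link can contain a familiar name without actually belonging to that site. For small business owners or anyone comfortable with a little Python, here is a minimal sketch of checking a link's real host against a short list of sites you trust. The `TRUSTED_DOMAINS` list and the `is_trusted_link` function are assumptions made up for this example; a check like this is only one layer and will not catch every trick.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: replace with the sites you actually use.
TRUSTED_DOMAINS = {"ic3.gov", "ftc.gov"}


def is_trusted_link(url: str) -> bool:
    """True only if the link's host is a trusted domain or a subdomain of one."""
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in TRUSTED_DOMAINS)


print(is_trusted_link("https://reportfraud.ftc.gov/"))        # True
print(is_trusted_link("https://ftc.gov.example-login.com/"))  # False
print(is_trusted_link("https://ftc.gov@evil.example/"))       # False
```

The second and third links both display "ftc.gov" but lead somewhere else. The part that matters is the end of the host name, just before the first single slash.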

What This Means for Homebuyers, Sellers and Renters

Real estate transactions are especially vulnerable because they involve large sums of money, tight deadlines and multiple parties. We see wire‑fraud attempts in Houston and across Texas, where scammers intercept emails or texts and send fake wiring instructions right before closing. Buyers can lose their entire down payment in minutes.

To protect yourself:

Never rely solely on email for wiring instructions. Call your escrow officer or title company using a verified number.

Do not click on links or reply directly to new account instructions. Independently confirm the details.

Keep your agent, lender and title company aware of any suspicious messages. We are part of your verification team.

Educate your family members. If your parents or children are helping with funds or paperwork, make sure they understand the wire‑verification rule.
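
The wire-verification steps above come down to one comparison: do the details in the message match what the title company confirmed by phone? A minimal Python sketch of that comparison follows. The function name `changed_fields` and all of the account data are made up for illustration, and passing this check is no substitute for the phone call itself.

```python
def changed_fields(verified: dict[str, str], received: dict[str, str]) -> list[str]:
    """List the fields where emailed details differ from the verified ones."""
    keys = sorted(set(verified) | set(received))
    return [k for k in keys if verified.get(k) != received.get(k)]


# Made-up example data; no real account details.
verified_by_phone = {"bank": "First Example Bank", "routing": "000000000", "account": "1111"}
from_email = {"bank": "First Example Bank", "routing": "000000000", "account": "9999"}

diff = changed_fields(verified_by_phone, from_email)
if diff:
    print("STOP: do not wire. Changed fields:", diff)
else:
    print("Details match what was confirmed by phone.")
```

Any difference at all, even a single digit, is a reason to stop and call the title company again on a number you already trust.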

Renters face similar risks with fake listings and phantom landlords. Always verify that a rental listing is legitimate before sending money. Use reputable sites, meet in person when possible and check property records. A landlord who refuses to meet or show a property may be a scammer.

Sellers can be targeted by fake investors and contractors. Beware of unsolicited offers that demand upfront fees for “repairs,” “marketing” or “staging.” Work with licensed professionals and verify credentials.

Simple Community Habits to Adopt

Here are practical habits every household can use to reduce risk:

Create a family verification phrase. Use it if someone calls claiming to be a family member in trouble.

Use the callback rule. Don’t act on urgent requests right away. Hang up and call back using a number you already trust.

Never share login codes. A one‑time code is the key to your account. Legitimate companies rarely ask you to read it back.

Confirm wiring instructions verbally. For real estate, call the title company directly.

Slow down when emotions are high. Pressure is a red flag.

Use strong passwords and multifactor authentication. A password manager can help.

Be careful with browser extensions. Delete what you don’t need and install only from trusted developers.

Talk about scams openly. Sharing experiences helps others avoid traps.

Where to Report Scams

If you or someone you know has been targeted, report it. The FBI urges victims to report to the Internet Crime Complaint Center (ic3.gov). The FTC also encourages reporting at ReportFraud.ftc.gov and provides consumer advice on spotting and avoiding scams. Reporting helps law enforcement track patterns and protect others.

The Bigger Point: We Protect Each Other by Learning Together

Scammers exploit trust, emotion and urgency. They use technology to sound and look legitimate. They target our families, our homes, our businesses and our savings. But we are not powerless. We can slow down, verify and educate each other.

A real emergency can survive a callback. A legitimate business can be reached through an official number. A real title company will confirm wiring instructions. A real family member can answer a private verification question. A real opportunity does not require secrecy or immediate payment.

The safest people online in 2026 and beyond will not be those who think they can spot every fake. They will be those who know how to confirm what is real.

We live in communities where we watch out for our neighbors when the storms come and bring food when someone is sick. Digital safety is no different. By talking about these scams, sharing this information and adopting verification habits, we make Texas a harder target for fraudsters and a safer home for everyone.

Disclaimer: This article is provided for general education and community awareness only. It is not legal, financial, cybersecurity, insurance, tax, lending, or real estate advice. Scam methods change quickly, and no article, checklist, or security practice can guarantee protection from fraud. Readers should verify information directly with official agencies, licensed professionals, financial institutions, title companies, lenders, and law enforcement when appropriate.

Real Estate Disclosure: Adriana C. Perez is a Texas real estate license holder affiliated with Surge Realty. Real estate services are provided through the sponsoring broker. Lone Star Living provides educational content for consumers and the community and is not a substitute for representation, a written agreement, or professional advice.

Fair Housing Statement: Lone Star Living and Adriana C. Perez support equal housing opportunity. Content on this website is not intended to express any preference, limitation, or discrimination based on race, color, national origin, religion, sex, familial status, disability, or any other protected class under applicable law.

Fraud Reporting: If you believe you are involved in an active scam or fraud attempt, contact your bank or financial institution immediately. You may also report suspected internet crime to the FBI Internet Crime Complaint Center and report consumer fraud to the Federal Trade Commission.
